Last updated: January 2026

In this lesson, you will learn what OpenShift is, why OpenShift is important, and how the OpenShift architecture is put together.


Introduction

OpenShift is Red Hat’s enterprise Kubernetes platform designed to simplify containerized application development, deployment, and management at scale. Built on top of Kubernetes, OpenShift adds powerful enterprise features such as enhanced security, developer tooling, CI/CD integration, and multi‑cloud support.

Since its early days, OpenShift has evolved significantly. In 2026, it is widely adopted by enterprises for building secure, scalable, and cloud‑native applications across on‑premises, public cloud, and hybrid environments.

Why OpenShift

If you are familiar with Docker, Podman, or another container runtime, you already know that these tools can create and run containers. In other words, a container runtime is enough to run a containerized application, which is fine.

However, when it comes to automating, orchestrating, and managing containerized applications, especially hundreds or thousands of them, these tools alone become difficult and often impractical to use. This matters even more now that heavy applications, such as core banking applications, are being containerized.

Hence the need for a tool that provides orchestration, automation, and high availability, and that can manage containerized applications at that scale. One such tool is OpenShift, which is what we are about to learn.

What Is OpenShift

OpenShift is a container application platform. Just like Kubernetes, the OpenShift platform orchestrates and manages containerized applications and makes it easy to scale applications as required.

OpenShift was developed by Red Hat. An OpenShift cluster is built on top of Kubernetes and Red Hat Enterprise Linux CoreOS (RHCOS). Because it is built on Kubernetes and compatible with it, an OpenShift cluster can be managed the same way as a Kubernetes cluster, this time around with the addition of OpenShift management tools such as the oc command-line client and the web console.

So permit me to say that OpenShift is just like a distribution of Kubernetes.

Before we look at the OpenShift architecture, let’s understand some of the Kubernetes components and objects, because OpenShift is built on top of Kubernetes.

Please click here to see the Kubernetes objects you need to understand.

OpenShift Architecture

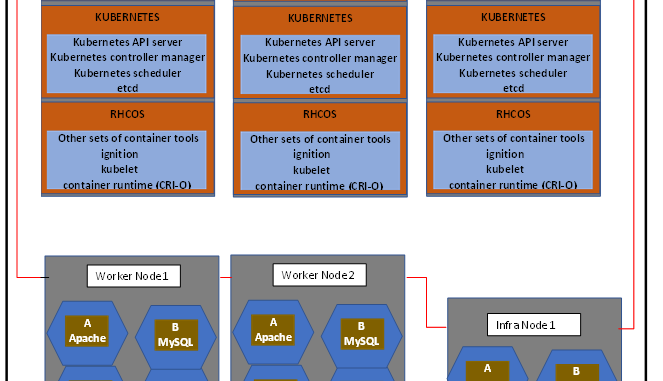

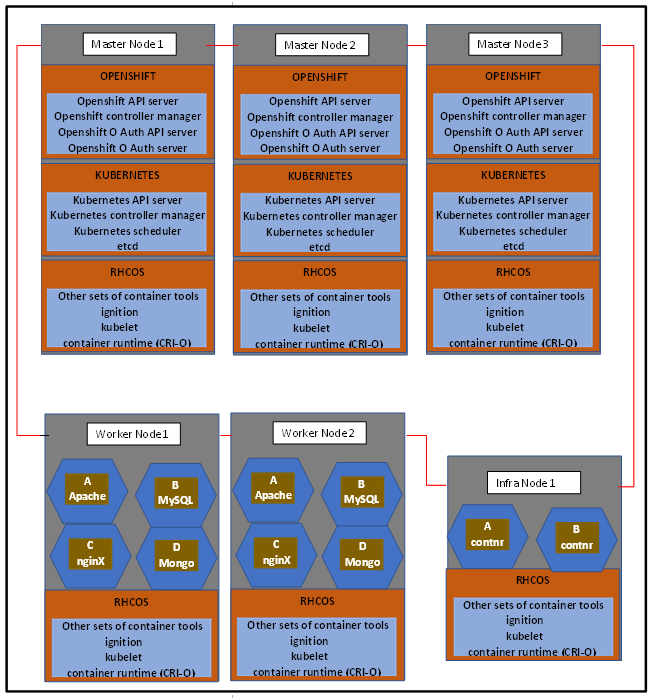

The OpenShift Container Platform, or rather the OpenShift cluster, consists of a number of nodes joined together, as seen in the OpenShift architecture diagrams above. After all, that is what a cluster is: a group of machines working together as one system. In OpenShift, we basically have the master nodes and the worker nodes and, in addition, the infra nodes.

The master nodes, which together form the control plane, provide the basic services that manage the OpenShift cluster. The worker nodes, also called compute nodes, are where your applications run. Since the worker nodes hold the workload, they are typically given more server resources (CPU and memory) than the master nodes.

The infra node, meaning infrastructure node, is used to host infrastructure services such as monitoring and logging. A standard highly available OpenShift cluster has three control plane nodes and at least two worker nodes.
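As a rough illustration of the node counts mentioned above, the following Python sketch checks a set of nodes against those minimums. The role names, node names, and the `check_topology` helper are made up for this example and are not part of any OpenShift tool.

```python
from collections import Counter

# Minimum node counts quoted in this lesson (assumed here as plain constants).
MIN_CONTROL_PLANE = 3
MIN_WORKERS = 2


def check_topology(nodes):
    """Return a list of problems for a {node_name: role} mapping (empty if fine)."""
    counts = Counter(nodes.values())
    problems = []
    if counts["control-plane"] < MIN_CONTROL_PLANE:
        problems.append(
            f"need {MIN_CONTROL_PLANE} control plane nodes, found {counts['control-plane']}"
        )
    if counts["worker"] < MIN_WORKERS:
        problems.append(f"need {MIN_WORKERS} worker nodes, found {counts['worker']}")
    return problems


# Hypothetical cluster: three control plane nodes, two workers, one infra node.
cluster = {
    "master-0": "control-plane",
    "master-1": "control-plane",
    "master-2": "control-plane",
    "worker-0": "worker",
    "worker-1": "worker",
    "infra-0": "infra",
}
print(check_topology(cluster) or "topology OK")
```

The infra node is not counted toward either minimum here, which mirrors the text: it is an addition to the control plane and worker nodes, not a replacement for them.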

Now let’s talk about the services, features and layers that must be present in these nodes.

1. Red Hat Enterprise Linux CoreOS (RHCOS)

The first thing that must be installed on every node is Red Hat Enterprise Linux CoreOS (RHCOS). RHCOS is a container-optimized operating system that includes the CRI-O container runtime. CRI-O is a Kubernetes-native container runtime, and it has replaced the Docker container runtime used in older versions of OpenShift. And, of course, the container runtime is what actually runs the containers in the cluster.

RHCOS also includes the kubelet service. The kubelet is the node agent: it talks to the container runtime on the node, it is responsible for starting and running pods, and it allocates node resources to the pods it runs. Another component of RHCOS is Ignition.

OpenShift uses Ignition as the first-boot configuration system: on a machine's first boot, Ignition configures the node so that it can come up and join the cluster. RHCOS also ships with a set of other container tools. In earlier versions of OpenShift, Red Hat Enterprise Linux (RHEL) could be used as the base operating system, but newer versions use RHCOS for both the control plane and the compute nodes.

RHCOS is immutable, meaning that you do not manage the operating system by hand the way you would with Red Hat Enterprise Linux. Instead, the cluster itself manages operating system configuration and updates. Hence, Red Hat has adopted RHCOS over RHEL as the node operating system.
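To give a feel for what a first-boot configuration looks like, here is a minimal Python sketch that builds a tiny Ignition-style JSON document which writes a hostname file. In a real cluster these configs are generated for you by the installer, so you would not normally write one by hand; the hostname value and the `minimal_ignition` helper are assumptions made for this example, and the spec version shown is just one valid value.

```python
import json


def minimal_ignition(hostname):
    """Build a small Ignition-style config that writes /etc/hostname on first boot."""
    return {
        "ignition": {"version": "3.2.0"},
        "storage": {
            "files": [
                {
                    "path": "/etc/hostname",
                    "mode": 0o644,  # serialized as the decimal integer 420
                    "overwrite": True,
                    # File contents are passed as a data URL.
                    "contents": {"source": f"data:,{hostname}"},
                }
            ]
        },
    }


# "worker-0" is a hypothetical node name used only for illustration.
config = minimal_ignition("worker-0")
print(json.dumps(config, indent=2))
```

The point of the sketch is only the shape of the document: a version header plus declarative sections describing what the node should look like after its first boot.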

2. Kubernetes Layer

On top of RHCOS, we have the Kubernetes layer. Don’t forget that OpenShift is built on top of Kubernetes and RHCOS. The Kubernetes layer consists of Kubernetes services such as the Kubernetes API server, the controller manager, the scheduler, etcd, and so on. If you have seen my Kubernetes course, you will already understand the responsibilities of these services. But for those who haven’t seen the course, I will explain.

*The Kubernetes API* is like the door to the Kubernetes cluster. Before you can interact with Kubernetes, you need to come in through the Kubernetes API server. The API server authenticates each request, checks that the caller is authorized, and validates the request before acting on it.

*The scheduler* decides which node a newly created pod will run on. It looks at the resources the pod requests and at the state of the nodes, picks a suitable node, and does this automatically by default.
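The scheduling idea can be sketched in a few lines of Python. This is a deliberately simplified model, not the real scheduler: it only filters nodes that have enough free CPU and memory and then prefers the one with the most free CPU. The `Node` class, the node names, and the numbers are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


def pick_node(nodes, cpu_request, memory_request):
    """Filter nodes that can fit the pod, then pick the one with the most free CPU."""
    feasible = [
        n
        for n in nodes
        if n.free_cpu_millicores >= cpu_request and n.free_memory_mib >= memory_request
    ]
    if not feasible:
        return None  # no node fits, so the pod would have to wait
    return max(feasible, key=lambda n: n.free_cpu_millicores)


# Hypothetical worker nodes with different amounts of free capacity.
workers = [
    Node("worker-0", free_cpu_millicores=500, free_memory_mib=2048),
    Node("worker-1", free_cpu_millicores=3000, free_memory_mib=8192),
    Node("worker-2", free_cpu_millicores=1500, free_memory_mib=512),
]
chosen = pick_node(workers, cpu_request=1000, memory_request=1024)
print(chosen.name if chosen else "no suitable node")
```

The real scheduler weighs many more factors, but the two-step shape (rule out nodes that cannot run the pod, then rank the rest) is the part worth remembering.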

*The etcd* is like the memory of the cluster. It is a distributed key-value store that holds the configuration and state of every object in the cluster. It does not store your application data.
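To make the role of etcd more concrete, here is a toy in-memory key-value store in Python. It is only a sketch of the idea of keeping cluster objects under keys: the `ToyStore` class, the key layout, and the object fields are illustrative assumptions, and the real etcd is a distributed, replicated database rather than a Python dictionary.

```python
import json


class ToyStore:
    """A tiny in-memory stand-in for a key-value store of cluster objects."""

    def __init__(self):
        self._data = {}
        self._revision = 0

    def put(self, key, obj):
        """Store an object under a key and return the new store revision."""
        self._revision += 1
        self._data[key] = json.dumps(obj, sort_keys=True)
        return self._revision

    def get(self, key):
        """Return the stored object, or None if the key does not exist."""
        raw = self._data.get(key)
        return None if raw is None else json.loads(raw)

    def list_prefix(self, prefix):
        """Return all keys that start with the given prefix, sorted."""
        return sorted(k for k in self._data if k.startswith(prefix))


store = ToyStore()
# Hypothetical keys and objects, chosen only to show the idea.
store.put("/registry/pods/demo/web-0", {"phase": "Running", "node": "worker-1"})
store.put("/registry/pods/demo/web-1", {"phase": "Pending", "node": None})
store.put("/registry/services/demo/web", {"port": 8080})
print(store.list_prefix("/registry/pods/demo/"))
print(store.get("/registry/pods/demo/web-0"))
```

Everything the other control plane services know about the cluster is read from or written to this kind of store, always through the API server.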

*The controller manager* runs the controllers that drive Kubernetes operations in the cluster. It watches the cluster state through the API server (the state itself is stored in etcd) and knows what the desired state of your cluster should look like.

If the cluster is not in its desired state, the controller manager uses the API to enforce the desired state. For example, if a pod managed by a Deployment dies, a controller creates a replacement pod through the API, the scheduler assigns the new pod to a node, and the kubelet on that node starts it.
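The desired-state idea can be sketched as a small reconcile function in Python. This is a simplified model under stated assumptions: pods are just names, the desired state is a replica count, and the `reconcile` helper and the `web` prefix are invented for this example.

```python
def reconcile(desired_replicas, running_pods, name_prefix="web"):
    """Compare desired and actual state and return the actions needed to converge."""
    actual = sorted(running_pods)
    if len(actual) < desired_replicas:
        missing = desired_replicas - len(actual)
        to_create = []
        index = 0
        # Reuse the lowest free ordinal for each replacement pod.
        while len(to_create) < missing:
            candidate = f"{name_prefix}-{index}"
            if candidate not in actual:
                to_create.append(candidate)
            index += 1
        return {"create": to_create, "delete": []}
    # Too many pods (or exactly enough): remove any surplus.
    return {"create": [], "delete": actual[desired_replicas:]}


# Hypothetical situation: three replicas are wanted, but web-1 has died.
print(reconcile(3, {"web-0", "web-2"}))
# Scaling down from two pods to one.
print(reconcile(1, {"web-0", "web-1"}))
```

A real controller runs this kind of comparison continuously, so any drift between desired and actual state is corrected without anyone stepping in.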

3. OpenShift Layer

On top of Kubernetes, we also have the OpenShift layer. The OpenShift layer consists of the OpenShift API server, the OpenShift controller manager, the OpenShift OAuth API server, and the OpenShift OAuth server. The OpenShift layer also consists of some other services, but these are the ones we need to start with, at least to get going with OpenShift.

*The OpenShift API server*. The Kubernetes API server proxies requests for OpenShift-specific resources to the OpenShift API server, which validates and configures the data for OpenShift resources such as templates, projects, routes, etc.
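The proxying described above can be pictured as a lookup from API group to backend. The Python sketch below is a toy model: the `backend_for` helper is invented for this example, and the group table is a small, partial selection chosen for illustration rather than a complete list.

```python
# Partial, illustrative mapping of API groups to the server that handles them.
GROUP_BACKENDS = {
    "route.openshift.io": "openshift-apiserver",
    "project.openshift.io": "openshift-apiserver",
    "template.openshift.io": "openshift-apiserver",
    "oauth.openshift.io": "oauth-apiserver",
    "user.openshift.io": "oauth-apiserver",
}


def backend_for(api_path):
    """Return which API server would handle a request path in this toy model."""
    parts = api_path.strip("/").split("/")
    if parts[0] == "api":
        return "kube-apiserver"  # core resources such as pods and services
    if parts[0] == "apis" and len(parts) > 1:
        # Anything not in the table is assumed to be served by Kubernetes itself.
        return GROUP_BACKENDS.get(parts[1], "kube-apiserver")
    raise ValueError(f"not an API path: {api_path}")


for path in (
    "/api/v1/namespaces/demo/pods",
    "/apis/apps/v1/namespaces/demo/deployments",
    "/apis/route.openshift.io/v1/namespaces/demo/routes",
    "/apis/user.openshift.io/v1/users",
):
    print(path, "->", backend_for(path))
```

From the client's point of view there is still only one door: every request goes to the Kubernetes API server, which forwards the OpenShift-specific ones.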

*The OpenShift controller manager* is responsible for making sure that OpenShift objects are in their desired state. It watches for changes in OpenShift objects, whose state is stored in etcd, and then uses the API to enforce the specified state whenever the actual state differs from the desired state.

*The OpenShift OAuth server* is responsible for integrating external authentication methods (identity providers). Users request tokens from the OpenShift OAuth server and then use those tokens to authenticate themselves to the API. *The OpenShift OAuth API server* is responsible for validating and configuring the data used to authenticate to the OpenShift Container Platform, such as users, groups, and OAuth tokens.
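The token flow can be sketched with a toy token server in Python. This is only a model of the idea (ask for a token, then present it with each request): the `ToyOAuthServer` class, the user name, and the lifetime are assumptions for illustration, and the real OAuth server delegates the login itself to a configured identity provider.

```python
import secrets


class ToyOAuthServer:
    """Issues opaque tokens and later resolves them back to a user name."""

    def __init__(self, lifetime_seconds=86400):
        self.lifetime_seconds = lifetime_seconds
        self._tokens = {}  # token -> (username, expiry time)

    def issue(self, username, now):
        """Create a random token for an already authenticated user."""
        token = secrets.token_urlsafe(32)
        self._tokens[token] = (username, now + self.lifetime_seconds)
        return token

    def whoami(self, token, now):
        """Return the user behind a token, or None if it is unknown or expired."""
        entry = self._tokens.get(token)
        if entry is None:
            return None
        username, expires_at = entry
        return username if now < expires_at else None


# Time is passed in explicitly (in seconds) to keep the example deterministic.
server = ToyOAuthServer(lifetime_seconds=60)
token = server.issue("alice", now=0)
print(server.whoami(token, now=30))   # token is still valid here
print(server.whoami(token, now=61))   # token has expired here
```

The API server never sees a password in this flow; it only has to check whether the presented token maps to a known user and is still valid.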

Having understood the OpenShift architecture, in the next lesson we are going to look at how to install an OpenShift cluster. Thank you for reading. Bye for now.

OpenShift Editions

OpenShift is available in multiple editions:

- Red Hat OpenShift Container Platform (OCP): self‑managed, on‑prem or cloud

- ROSA (Red Hat OpenShift on AWS): managed OpenShift on AWS

- ARO (Azure Red Hat OpenShift): managed OpenShift on Azure

- OpenShift Local (CRC): local development and testing

With these editions, you can choose the OpenShift architecture that fits the platform you run on.

OpenShift vs Kubernetes (Quick Comparison)

| Feature | Kubernetes | OpenShift |
|---|---|---|
| Installation | Manual / complex | Automated with operators |
| Security | User‑configured | Secure by default |
| Upgrades | Manual | Automated |
| CI/CD | External tools | Built‑in (Tekton, GitOps) |
| Enterprise Support | Optional | Included |

Common OpenShift Use Cases (2026)

OpenShift is commonly used for:

- Enterprise microservices platforms

- Hybrid and multi‑cloud deployments

- CI/CD pipelines and DevOps automation

- Modernizing legacy applications

- Highly regulated environments (finance, healthcare, telecoms)

Frequently Asked Questions (FAQ)

Is OpenShift better than Kubernetes?

OpenShift is not a replacement for Kubernetes but an enterprise‑ready Kubernetes distribution with added security, automation, and tooling.

Can OpenShift run on‑prem and in the cloud?

Yes. OpenShift supports on‑premises, public cloud, private cloud, and hybrid environments.

Is OpenShift suitable for small teams?

It can be, but OpenShift is best suited for organizations that need enterprise‑grade features and support.


EX280 Exam Practice Questions (2026 & Above)

Are you preparing for the Red Hat EX280, EX294 or RHCSA exam?

We have put together a comprehensive set of practice questions designed specifically for OpenShift and Linux professionals.

Why use these practice questions?

- Realistic scenarios aligned with the 2026 exam objectives

- Focused on OpenShift architecture, deployments, and troubleshooting

- Boosts confidence and readiness for the actual exam

Tip: Review this section after studying the OpenShift concepts above. Applying theory to practice will help you retain information and succeed on the exam.
